What an AI Center of Excellence (AI CoE) Is — and Isn’t

Feb 18, 2026

- What problem should an AI CoE actually solve for your organization?

- Who must own decisions vs. who should execute them inside an AI CoE?

- How do you stop pilots accumulating and start delivering production value?

In a mid-size European bank, two teams built promising models. One team treated each model as an isolated project: separate data plumbing, separate configuration, and no clear handoff to operations. The other team agreed a simple shared canvas: common intake, a single RACI for approvals, and definitions of readiness and done. When a regulator asked about model governance, the first team scrambled; the second produced a concise audit trail and a deployment plan. The turning point was not technology — it was the operating model. Leadership aligned on a lightweight center to coordinate ownership, reduce duplication, and set minimum governance. The lesson: an AI CoE is about enabling consistent decisions, handoffs, and repeatable delivery — not building every model centrally.

Key Takeaways:

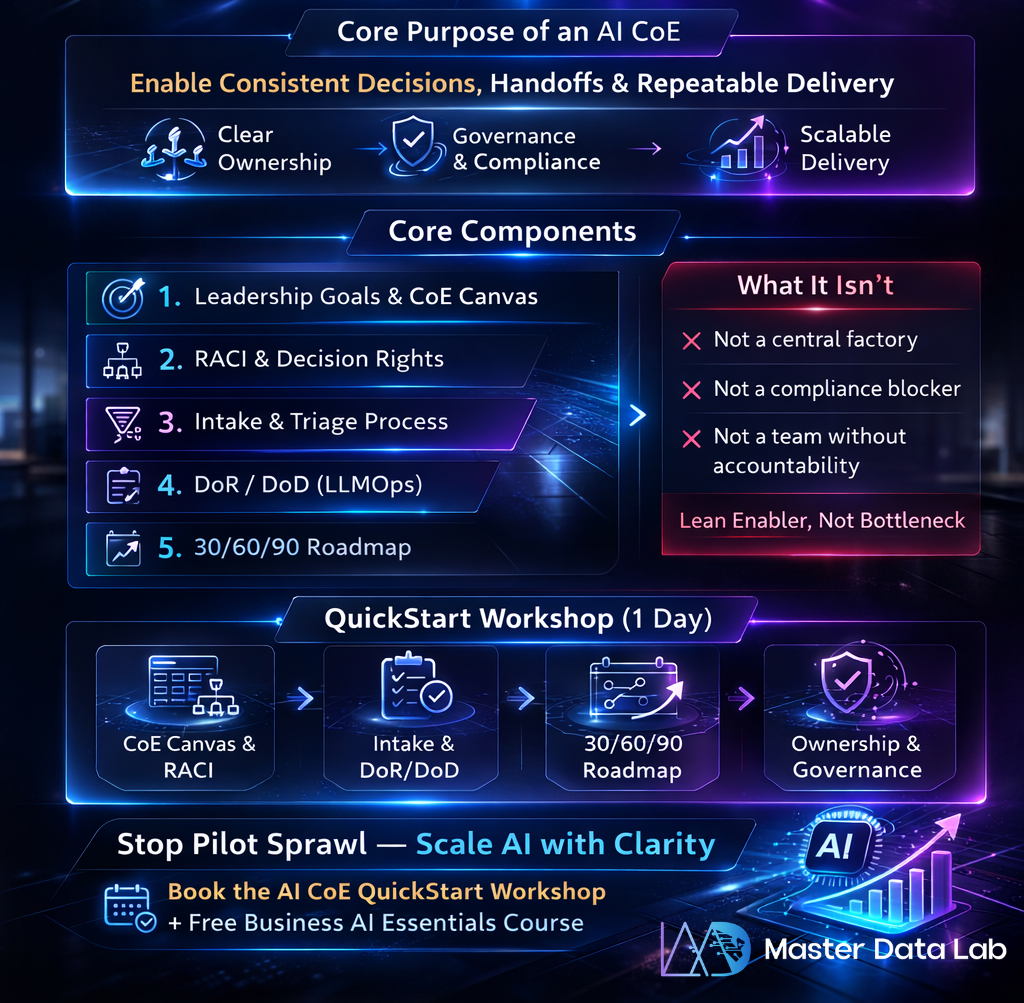

- An AI CoE is an operating layer that reduces friction between strategy, engineering, and operations.

- It defines the rules of engagement (intake, RACI, DoR/DoD) rather than centralizing all delivery.

- Quick alignment (a one-day QuickStart Workshop) accelerates clarity and reduces pilot sprawl.

What an AI CoE is: a practical definition

An AI Center of Excellence (AI CoE) is a cross-functional operating model that standardizes how AI projects are proposed, approved, built, and maintained. It focuses on three outcomes: clear ownership, predictable delivery, and minimum viable governance. The CoE provides common artifacts (intake forms, CoE Canvas, RACI), best-practice playbooks (LLMOps checks, DoR/DoD), and a light governance layer to ensure compliance and reproducibility. It is an enabler, not a delivery monopoly.

What an AI CoE isn’t

- Not a factory that centralizes all model development.

- Not a compliance police force that blocks innovation.

- Not a permanent team that removes accountability from product and engineering owners.

Keep the CoE lean: it should create standards, review high-risk projects, and help teams adopt repeatable patterns.

Core components and a simple framework

Use a compact framework to define your CoE responsibilities and artifacts. Here is a recommended 6-step framework for a QuickStart alignment:

- Align leadership goals — agree success criteria for the CoE.

- Define the CoE Canvas — roles, services, intake, and KPIs.

- Establish RACI for decisions — who approves models, who deploys, who operates.

- Create intake + triage — a lightweight form to evaluate risk, data readiness, and value.

- Define DoR (Definition of Ready) and DoD (Definition of Done) for LLMOps and model delivery.

- Build a 30/60/90 roadmap — prioritize 3 near-term initiatives and their owners.

Practical examples

- Intake example: a 5-question form that captures business objective, data owner, expected output, compliance flags, and target SLA.

- RACI example: Product owner (A), Data Engineering (R), ML Engineers (R), CoE (C), Legal/Compliance (I).

- DoR/DoD: DoR includes data availability and access; DoD includes monitoring setup and rollback plan.
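The three examples above can be sketched as plain data structures. This is a minimal illustration, not a prescribed implementation: the names `IntakeRequest`, `triage`, `HIGH_RISK_FLAGS`, `validate_raci`, and `missing_items` are hypothetical, the flag values are placeholders, and the "exactly one Accountable" rule is a common RACI convention assumed here rather than something the article mandates.

```python
from dataclasses import dataclass, field

# Hypothetical compliance flags that route a request to CoE review.
# Replace with the flags your own policy defines.
HIGH_RISK_FLAGS = {"personal_data", "credit_decision", "regulated_output"}


@dataclass
class IntakeRequest:
    """The five intake questions from the example above."""
    business_objective: str
    data_owner: str
    expected_output: str
    compliance_flags: set[str] = field(default_factory=set)
    target_sla: str = ""


def triage(request: IntakeRequest) -> str:
    """Return 'incomplete', 'coe_review', or 'fast_track'."""
    required = [request.business_objective, request.data_owner,
                request.expected_output, request.target_sla]
    # A request with any blank required answer goes back to the requester.
    if any(not value.strip() for value in required):
        return "incomplete"
    # Any high-risk flag sends the request to the CoE for review.
    if request.compliance_flags & HIGH_RISK_FLAGS:
        return "coe_review"
    return "fast_track"


# RACI assignments taken from the example above.
RACI = {
    "Product owner": "A",
    "Data Engineering": "R",
    "ML Engineers": "R",
    "CoE": "C",
    "Legal/Compliance": "I",
}


def validate_raci(raci: dict[str, str]) -> bool:
    """Assumed convention: exactly one Accountable, at least one Responsible."""
    codes = list(raci.values())
    return codes.count("A") == 1 and codes.count("R") >= 1


# DoR/DoD items from the example; real checklists are usually longer.
DOR = ["data_available", "data_access_granted"]
DOD = ["monitoring_configured", "rollback_plan_documented"]


def missing_items(checklist: list[str], completed: set[str]) -> list[str]:
    """List checklist items not yet completed, in checklist order."""
    return [item for item in checklist if item not in completed]
```

Keeping these artifacts as small, versioned data rather than free-form documents is one way to make triage decisions and readiness checks repeatable across teams; a spreadsheet or form tool would serve the same purpose.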

Common failure patterns

- Pilot hoarding: dozens of pilots with no path to production.

- Centralization trap: CoE becomes a delivery bottleneck.

- Lack of decision rights: teams wait on approvals and lose momentum.

- Missing intake discipline: projects start without data contracts or owners.

Checklist — readiness to launch an AI CoE

- Leadership sponsorship and agreed success criteria.

- A one-page CoE Canvas describing scope and services.

- RACI for core decisions and approvals.

- A lightweight intake form and triage process.

- DoR and DoD templates for LLMOps/model delivery.

- 30/60/90 roadmap with named owners.

- A communication plan and training offer for teams.

Guardrails & Human-in-the-loop

In medium-risk environments, implement guardrails that require human oversight for high-impact decisions and keep audit trails of model behavior. Follow public guidance on risk management and responsible AI: align processes with NIST risk-management concepts and the responsible AI practices published by Microsoft, AWS, and Google to define review gates and documentation expectations. Use human-in-the-loop patterns for sensitive outcomes (approval, overrides, incident handling).
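A human-in-the-loop gate with an audit trail can be sketched in a few lines. This is an illustrative pattern under stated assumptions: the `Decision` type, the two-level `impact` field, the status strings, and the in-memory `audit_log` are all hypothetical, and a real system would persist the log and define impact levels through its own risk policy.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Decision:
    case_id: str
    model_output: str
    impact: str  # "low" or "high"; the classification scheme is an assumption


# In-memory audit trail for illustration only; persist this in practice.
audit_log: list[dict] = []


def finalize(decision: Decision, reviewer: Optional[str] = None,
             override: Optional[str] = None) -> str:
    """Release a model output, requiring a named reviewer where it matters."""
    # An override is a human action, so it must be attributable to someone.
    if override is not None and reviewer is None:
        raise ValueError("an override requires a named reviewer")
    if decision.impact == "high" and reviewer is None:
        # High-impact outputs are held until a human signs off.
        status, outcome = "pending_review", ""
    elif override is not None:
        status, outcome = "overridden", override
    else:
        status, outcome = "approved", decision.model_output
    # Every call is recorded, including held decisions.
    audit_log.append({
        "case_id": decision.case_id,
        "status": status,
        "reviewer": reviewer,
        "model_output": decision.model_output,
        "final_outcome": outcome,
        "timestamp": datetime.now(timezone.utc).isoformat(),
    })
    return status
```

The design choice worth noting is that the log records the original model output alongside the final outcome, so a later review can see both what the model proposed and what a human decided.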

What you get in the AI CoE QuickStart Workshop (1 day), which aligns leadership and defines the CoE Canvas, RACI, intake, and a 30/60/90 roadmap:

- Executive alignment on CoE mission and success criteria

- Draft CoE Canvas tailored to your organisation

- RACI matrix for model ownership and approvals

- Intake and triage template (DoR sample)

- Draft 30/60/90 roadmap with prioritized initiatives and owners

If your organisation is stuck in pilot mode, a one-day AI CoE QuickStart Workshop can create the clarity you need: CoE Canvas, RACI, intake, and a 30/60/90 roadmap to reduce backlog friction and accelerate production value.

Book the QuickStart Workshop and pair it with our Business AI Essentials free course to equip teams for the next steps.

FAQ:

Do we need a CoE if we already have a data team?

Yes. A CoE complements data teams by providing the operating model and decision framework that enable repeatable AI delivery and governance.

Should the CoE own all AI development?

No. The CoE should enable teams with standards, tooling, and reviews while leaving delivery to product and engineering owners.

How quickly can we get value?

With focused alignment and a QuickStart workshop, leadership clarity and an initial roadmap can be achieved in a day; execution follows in sprint cycles.

References:

- NIST — AI Risk Management Framework — nist.gov

- Microsoft — Responsible AI resources — learn.microsoft.com

- AWS — Well-Architected Machine Learning Lens — docs.aws.amazon.com

- Google Cloud — AI and ML documentation — cloud.google.com

- OpenAI — best practices and safety guidance — platform.openai.com

- IBM — AI governance guidance — ibm.com
